Some lessons for component manufacturers that could help them outdo their competitors.

The electronics industry is the pioneer when it comes to ingredient branding. There have been many great and iconic cases, and there is a lot to learn from these.

The computer industry brought ingredient branding to the forefront. Assembling desktop computers using branded components was a viable way to save money for the end user. An assembler could take a Seagate hard disk, Intel processor, Samsung monitor and Logitech mouse to create a computer as good as an HP or Compaq desktop.

The slow shift to the laptop eliminated the ability to assemble. However, by then, the industry was operating on well-known ingredient brands for processors, hard disks, monitors and mice. Although the assembling industry was no longer threatening computer brands, the result of this ingredient branding was that there was not much differentiation between an HP, a Compaq and an IBM.

What is an ingredient brand

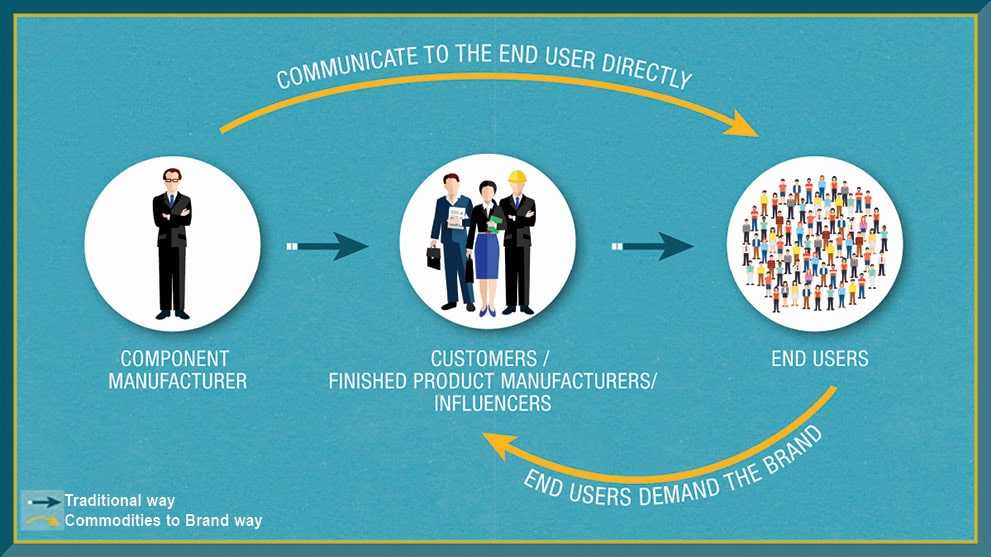

When a component product builds a brand, it is called an ingredient brand. It is not built with the customer as the target. Instead, it is built with the end user in mind. When the end user knows the brand well, it creates pull for the brand.

Many companies feel that if their customers know them and their companies are well known in the industry for providing quality products, they have a brand.

But, I beg to differ. The end user needs to know the company and its products. The company needs to create some pull for its products for it to truly call itself a brand.

Commoditised category

Until the first brand in the component category takes that first step to build a brand, the category is largely commoditised. Now the question is, what is a commoditised category?

It is a category in which no player has built a brand in a significant manner. Players remain largely unknown to the end user. And because of that, no one player can command a price premium for each sale it makes. Players build relationships with their customers by providing good quality at best possible prices, and get sales based on that, rather than the strength of the brands.

Benefits of ingredient branding

An ingredient brand goes into making the finished product. The finished product uses the ingredient brand to be sold, because it adds credibility to its quality. It also allows the finished product manufacturer to charge a premium.

For the ingredient brand, the ability to charge a premium is the single biggest advantage of investing in brand-building. Because the end user desires a finished product that uses its ingredient, it allows the ingredient to charge a premium, which the finished product passes on to the end user.

Another critical benefit for the ingredient brand is not being substituted. Once the finished product starts relying on the ingredient to make a sale, it reduces the ability of the finished product to substitute the ingredient with another component. Some might argue that this is even more important than charging a premium, because it ensures business continuity.

Be the first mover

One critical thing for a component to become an ingredient brand is that it needs to be first. The first player tends to become the market leader and remain so, provided it does not make a hash of its marketing efforts.

The first mover usually is seen as the expert by the end user. Players that follow—yes, they will follow—are seen as me-too players.

The how

Let us now look at how ingredient branding is done, by looking at cases of others who have done it well.

Intel

This is the case of the century with regards to ingredient branding. It is one of the most successful cases ever.

We go back to before Intel was known to the world. By 1990, Intel had launched its 386 chip, which was being supplied to PC manufacturers like Compaq, IBM and HP. But things started to turn, and Intel started losing market share. It discovered the reason to be Cyrix, another chip maker. Cyrix was reverse-engineering Intel’s chip and selling it for half the price. PC manufacturers were also willing to replace Intel with Cyrix. After all, who would not, if it meant getting similar quality at a lower price?

But Intel was ambitious. The product it was fighting was a me-too one, and it did not want to battle it out based on price. The decision Intel took changed the computer industry forever.

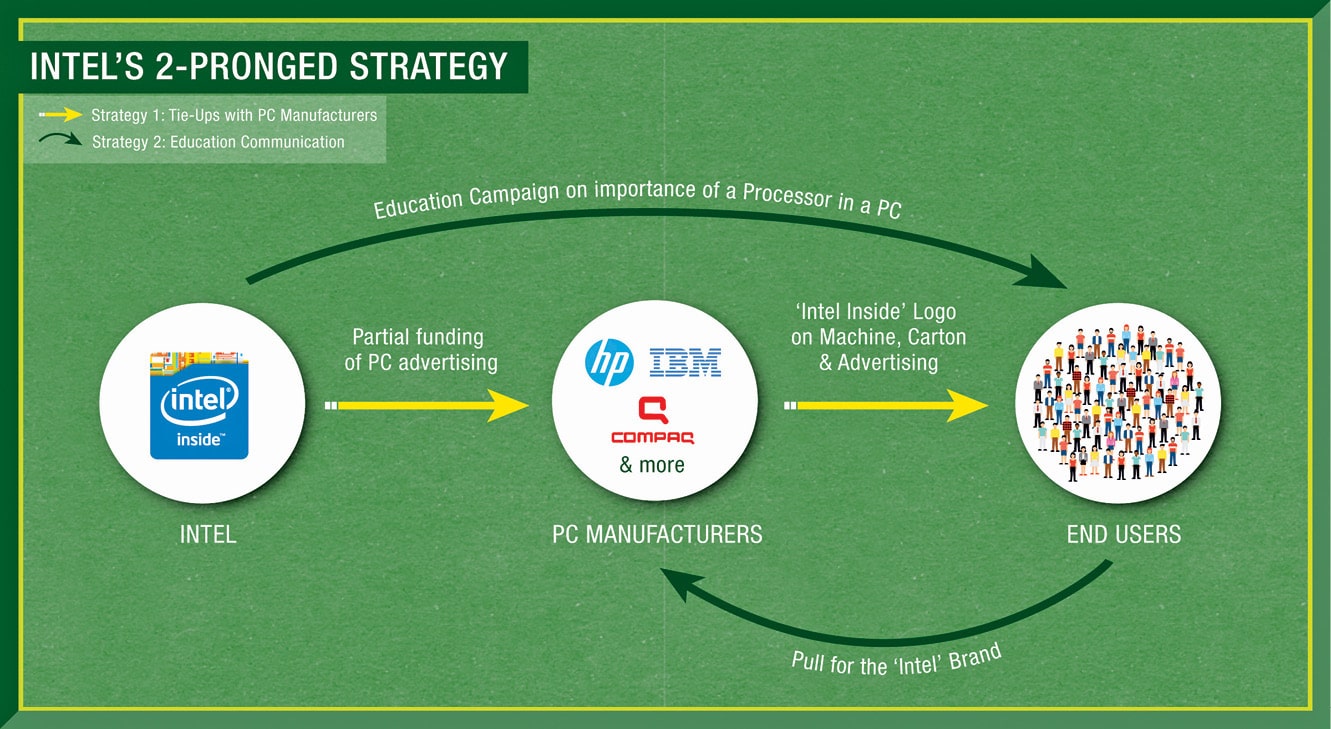

It did two key things. The first was a business model decision. Intel chose to work with its customers differently. It decided to partner with them. This was not just in name, like many customer-centric programmes. Neither was it just smoke and mirrors.

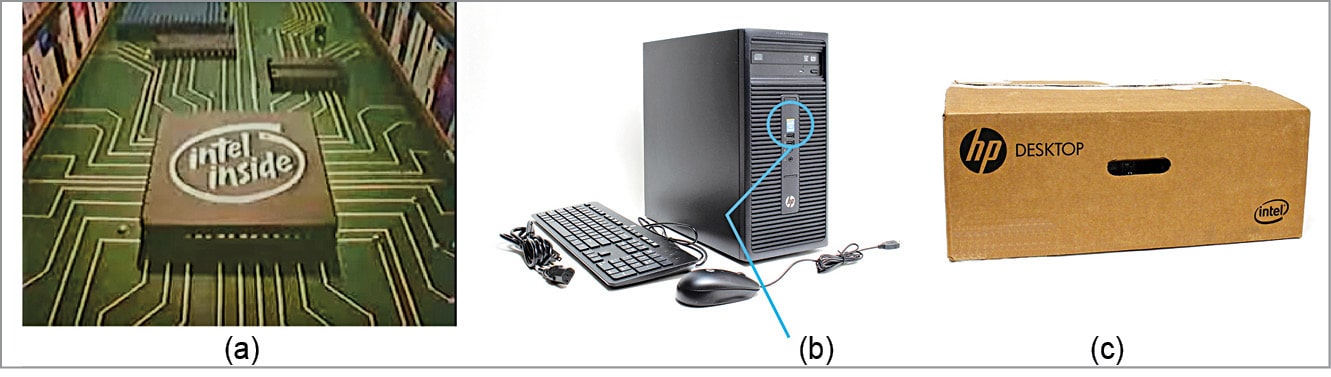

Intel put its money where its mouth was, and took a huge risk. It decided to part-fund its customers’ marketing efforts. In exchange, its customers put the Intel Inside logo on the machine and carton as well as in advertising. This was a mutually beneficial relationship to build the PC market.

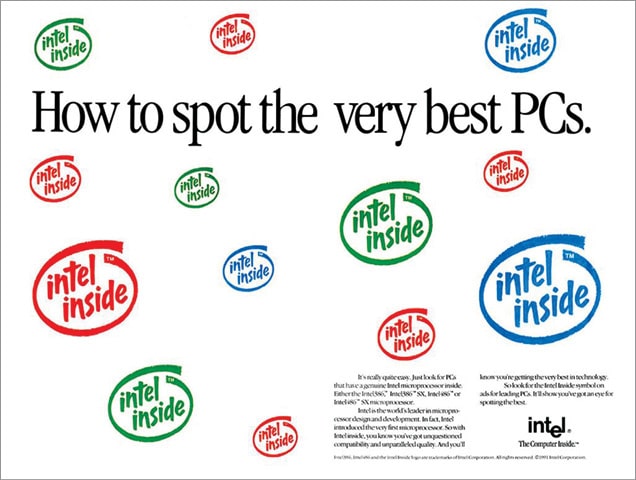

The second was an education campaign with the end user. Intel realised that for the end user what is inside the box was unknown, not understood and probably too technical. So, it decided to educate the end user on the importance of the processor. The campaign it developed had two objectives. The first was to position the processor as the heart of the PC that is responsible for the speed of the PC. The second was the compatibility of the processor with a multitude of software.

Both these efforts helped build a brand for Intel with the end user, thereby creating pull for the brand.

Takeaway. Intel made the end user its target audience, even though the end user was buying a computer, not a processor. The education campaign let the processor upstage the computer. By calling the processor the heart of the PC and saying it was responsible for the speed of the PC, Intel made it the most critical component of the PC and, over time, more important than the brand of the PC.

Intel did not sign any exclusivity agreement with any one PC manufacturer. That ended up reducing differentiation between PCs. IBMs, HPs and Compaqs all had Intel processors. Because of that, the focus shifted from the PC to the processor.

The brand soon started charging a 400 per cent premium over Cyrix. It got back the market share it had lost. Overall, Intel has dominated the PC processor market ever since.

Dolby

When we think of Dolby, we think of incredible sound. It is everywhere, including films, TVs, cellphones and so on. But there is a lot to learn about how a company can get to this point—the things it did right and what it did not.

Ray Dolby, who died in 2013, was an engineer by profession who dabbled in sound technology even before he founded the company. The first technology he came up with was a first-in-the-world one. He figured out how to reduce the hiss that happens while recording sound. This he called noise reduction (NR).

That was the beginning of an incredible journey. Dolby first got sound studios in England to adopt NR. Soon, US sound studios followed suit. He rode the whole wave of cassette tapes and tape players. He then moved into providing NR sound for movie theatres.

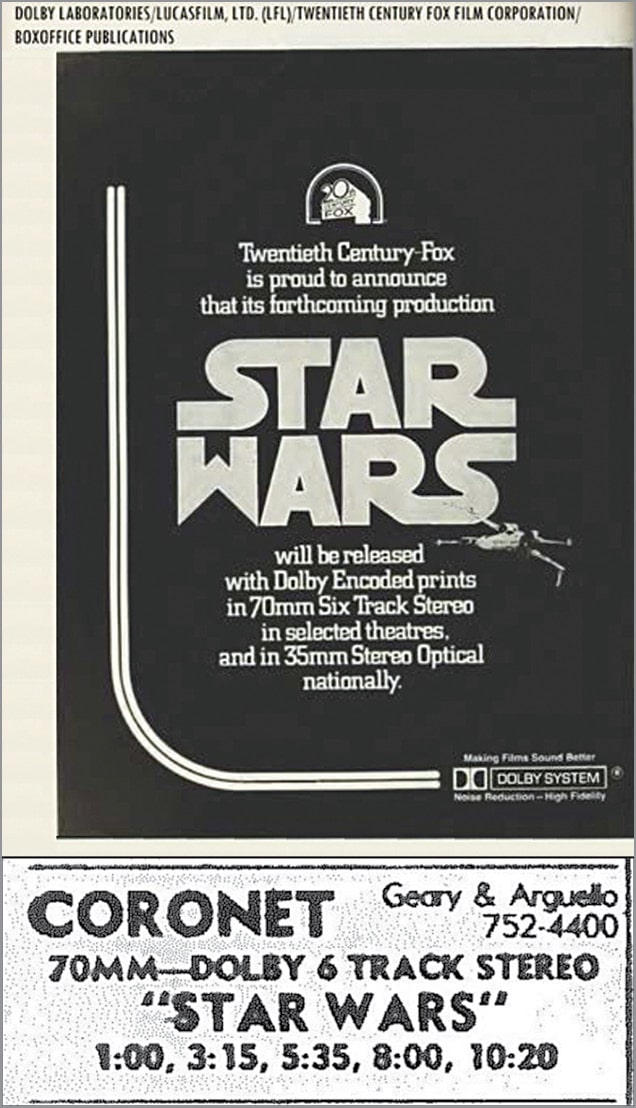

At the very beginning, Dolby decided it would manufacture professional products only and license technologies appropriate for consumers. But the big inflection point came when Star Wars used Dolby to provide sound for the film. Even though state-of-the-art sound was being used in albums and concerts, in movies it was a commoditised category. Stephen Katz, sound consultant from Dolby who worked on Star Wars, said, “Sound was a very important component for Star Wars, and it was a component that Hollywood virtually ignored. People used to tell me, ‘Nobody listens to the sound.’” Well, not until George Lucas decided to change it. It is his vision that Dolby brought to life.

None of the movie theatres were fitted with Dolby sound, but Twentieth Century Fox insisted that if they wanted the 70mm print (vs the 35mm), they needed to install Dolby.

And because very few movie theatres had made that shift, initially Star Wars released with only 40 prints as against the usual 800 prints. And, only three prints had Dolby sound.

The film created such magic that things took off from there. Movie theatres clamoured to have Dolby sound. Without Star Wars, Dolby would not be where it is now. Equally, without Dolby, Star Wars would not have been as magical. Sound added a lot to the film. So much so that the industry henceforth looked at sound differently. It was no longer a commoditised product.

Dolby’s journey did have a hiccup when it did not realise the impact of digital audio on cinemas. Sound until then, although of excellent quality, was analogue. An entrepreneur, Terry Beard, along with Steven Spielberg, Universal Studios and other investors, created Digital Theater Systems (DTS). Dolby lost out and took a few years to regain the leadership, which it determinedly did.

Dolby’s business focus has been innovation and patent protection. It has made most of its money through licensing. As of 2018, it still makes 90 per cent of its revenue through licensing, and 10 per cent through products and services.

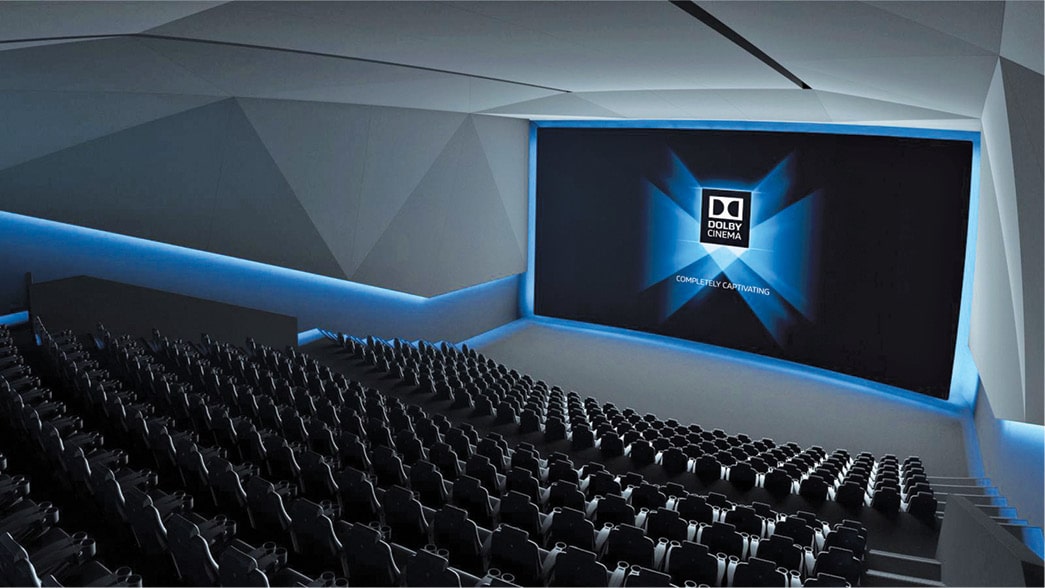

Now, Dolby has permeated every aspect of our lives. Its movie theatre product is Dolby Atmos, and it has moved beyond audio into video with Dolby Vision.

Its home theatre product is Dolby Digital Plus. Dolby is working with companies to provide its products on smartphones, TVs, games, tablets, streaming services, headphones, movie theatres, sound studios and more.

Takeaway. Dolby is a company that has innovated consistently to improve its own products. It has worked to make itself redundant, so no one else can. This focus on innovation has meant that it has rarely needed to rely on buying technology from outside the company. The decision to focus on top-end professionals and leave the rest to licensing ensured a continuous inflow of funds, so much so that it did not feel the need to go public until 2005. Its relentless focus on patenting has resulted in it having 8100 issued patents and 4200 pending patents in 100 countries, as of 2017.

Dolby also works with its customers to ensure there are no design mistakes in their products and that they are of high quality. One of the key decisions that Dolby has taken is to keep Dolby licensing affordable, so companies would rather use the technology than not. This has made the technology accessible and available everywhere, kept competition out to a large extent and prevented it from being substituted by DTS. Even though it lost out to DTS initially, it managed to make a comeback.

The bottom line

Both products, before they decided to build an ingredient brand, belonged to commoditised categories. It always takes a bold mindset on someone’s part to make moves like these. Dolby and Intel both built their brands with the end user of the finished products that their products go into, so that there is pull for the brands.

Intel provided funds to its customers’ marketing budgets, which built loyalty and prevented substitution.

And Dolby made licensing affordable, and made sure the technology was great. This is what prevented others from dominating and allowed Dolby to get back in the race.

Principles followed by the cases in this article can be used by many businesses:

- To create partnerships with customers

- To focus on product—innovating to make yourself redundant, so no one else does

- To recognise the stage your product is at, and identify whether education is needed or competitive communication

- To look out for new technologies and not miss them, as Dolby did with digital

- To use topical happenings or big moments in your industry to your advantage, as these can be big inflection points in your industry—the way Dolby did with Star Wars

NVIDIA and the GPU

NVIDIA is synonymous with gaming. And for good reason! It was established in 1993 by Jensen Huang, Chris Malachowsky and Curtis Priem. This was only three years after Intel launched the Intel Inside campaign that sent its image sky-rocketing. NVIDIA’s journey is different: it has gone from being a niche player to a far more mainstream one.

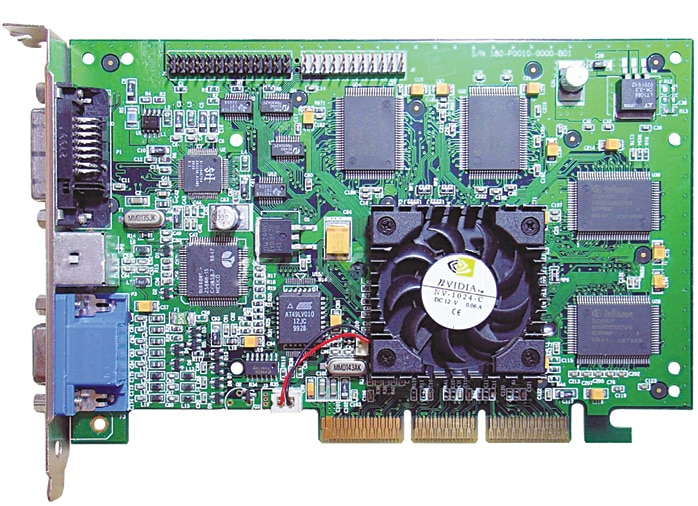

In 1995, NVIDIA first launched a PCI card with inbuilt 2D and 3D graphics. This was a commercial failure that forced it to regroup. It came up with a strategy based on its understanding of its impatient consumers, who wanted the best technology sooner than the then market average of 18 months. Hence, it decided to launch a new chip every six months. This turned out to be a watershed moment for the company.

From this strategy emerged NVIDIA’s first successful processor in 1997, called Riva 128. This was a big success, selling a million units in four months. Then in 1999, it came up with a game changer, the graphics processing unit (GPU) called GeForce 256.

So what is the difference between a GPU and a CPU (central processing unit)? Well, a GPU is a processor that renders images, video and animations for the computer screen. It takes the load off the CPU, as it does the rendering instead. But the great thing about a GPU is that it performs parallel functions—something a CPU is not designed for. And these parallel functions are needed in gaming to provide zero lag and better refresh rates.
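For readers who like to see an idea in code, here is a minimal sketch of what "parallel functions" means. It is an illustration only and an assumption on my part, not how a GPU is actually programmed: the `brighten` function, the eight-pixel `frame` and the use of Python threads are all hypothetical stand-ins. The point is that each pixel can be processed without knowing anything about its neighbours, so the work can be handed to many workers at once.

```python
from concurrent.futures import ThreadPoolExecutor


def brighten(pixel: int, amount: int = 40) -> int:
    """Brighten one greyscale pixel (0-255), independently of every other pixel."""
    return min(255, pixel + amount)


# A hypothetical 8-pixel "frame"; a real frame has millions of pixels.
frame = [0, 30, 60, 90, 120, 200, 230, 255]

# CPU-style: work through the pixels one after another.
serial = [brighten(p) for p in frame]

# GPU-style in spirit: hand the same function to several workers at once.
# pool.map returns results in the same order as the input.
with ThreadPoolExecutor(max_workers=4) as pool:
    parallel = list(pool.map(brighten, frame))

# Both routes give the same picture; only the way the work is shared differs.
print(serial == parallel)
```

Python threads will not make this tiny example faster. The sketch only shows why rendering suits a processor built to run thousands of such independent steps side by side.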

Connecting with gamers

What better proof can there be of the product’s quality than Microsoft deciding to use an NVIDIA GPU for its first Xbox launch? Sony’s PS3 followed in 2005. Soon, all game creators started using NVIDIA’s gaming platform. By 2016, more than 100 game creators were using it. Not just that, all Hollywood movies with great graphics and animation in the last few years have been created on machines with NVIDIA GPUs.

Being the brand of choice for Xbox and PlayStation as well as the brand that helped deliver Oscar-winning movies are some of the key ways NVIDIA became synonymous with gaming. This is proof of the product, its capability and performance, which gamers see every day as they get to enjoy the latest graphics on Xbox or PlayStation.

However, NVIDIA was not happy just to provide proof to its consumers. It needed to connect with them, too. So it used social media in a big way. It has a blog that features all the latest that is happening at NVIDIA, and the same content is shared on all social media platforms. Its YouTube channel not only showcases NVIDIA’s latest product offerings, but also features user-generated content in the form of reviews by tech influencers or gaming simulations. In fact, NVIDIA has a strong network of tech influencers that it keeps engaged to earn positive reviews of its products.

Another key effort to connect with gamers is gaming conferences, such as the Game Developers Conference (GDC) in the US and Gamescom in Germany. NVIDIA puts up great performances at these conferences, often unveiling new launches and always showcasing new technologies.

GPGPU is the future

What happened in the last few years has made things interesting. GPUs can now be used for general-purpose computing, an approach known as GPGPU. Because the GPU can carry out many parallel functions, it has the potential to someday replace the CPU.

But that is not something Intel will allow so easily. In fact, there have been legal battles that Intel has fought with NVIDIA, AMD and the Federal Trade Commission (FTC), where Intel had to settle and pay. (The FTC is there to protect the consumer and to prevent anti-competitive activities by companies.)

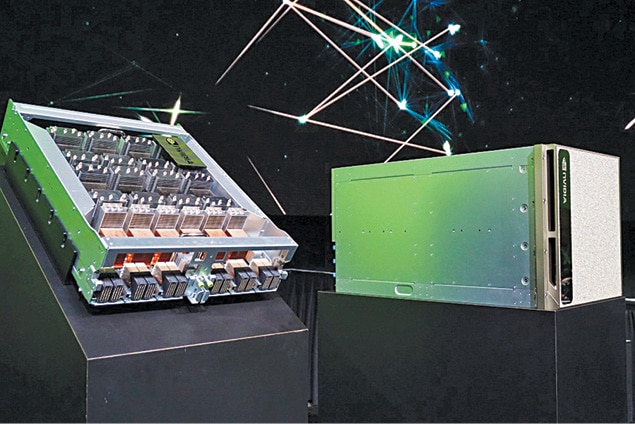

Since a GPU can carry out some of the functions of a CPU, and at many times its power, some interesting uses have come up. This is a key reason the artificial intelligence (AI) revolution has gone into overdrive: the creation of super computers with tremendous processing power. And a GPU is basically a super computer processor.

Smart cities is one such use case. Cameras placed all across the city capture a lot of data, which GPUs analyse. This helps with traffic and parking management, law enforcement and city services, among others.

In healthcare, GPUs can help with medical imaging, analysing genomes and so on.

NVIDIA recognises this as a breakthrough phase. Academics are pioneering scientific discoveries, and they need computing power for their experiments. Hence, NVIDIA is convincing universities to adopt this computing power.

Retail is using AI to optimise the supply chain, and using user data to increase conversion, provide customised shopping experiences and enable micro-targeting and pricing, among other things.

AI touches all aspects of self-driving cars—be it safety, driver alertness, easing traffic congestion or designing new products. NVIDIA is being generous with its technology. It is not only working on its own self-driving technology, but is also working with companies like Uber, Baidu, Toyota, Mercedes-Benz, Audi, Volkswagen and Tesla. Clearly, the company believes that partnering is the way forward.

A big thrust area for NVIDIA moving forwards is super computers, which are driving cloud services. All of these are giving up using CPU servers and are moving to GPU ones, including Amazon, Microsoft, Google and Baidu.

Let me give you an idea of how much more powerful a GPU server is in comparison to a CPU server. One GPU server can replace 160 CPU servers. And when you interconnect multiple GPUs, a speed of two petaFLOPS (for the interested, one petaFLOPS is 10^15 FLOPS) can be achieved. These computers are needed to solve the most complex AI challenges of the future and, frankly, it is mind-boggling where GPUs are taking the power of computing.
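To put those figures in perspective, here is a small back-of-the-envelope sketch. It takes the numbers quoted above (160 CPU servers per GPU server, two petaFLOPS) at face value. The function names and the example of ten GPU servers are my own assumptions for illustration, and the arithmetic assumes the 160-to-1 ratio holds for every server added, which real workloads may not bear out.

```python
PETA = 10**15  # SI prefix "peta": one petaFLOPS is 10^15 FLOPS


def petaflops_to_flops(petaflops: float) -> float:
    """Convert petaFLOPS to plain floating-point operations per second."""
    return petaflops * PETA


def cpu_servers_replaced(gpu_servers: int, ratio: int = 160) -> int:
    """CPU servers displaced by a number of GPU servers, assuming the
    article's 160-to-1 ratio holds for every server added."""
    return gpu_servers * ratio


# Two petaFLOPS written out in scientific notation.
print(f"{petaflops_to_flops(2):.0e} FLOPS")

# A hypothetical rack of ten GPU servers.
print(cpu_servers_replaced(10), "CPU servers")
```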

Takeaway

Understanding market dynamics and consumers is critical. This is what led to NVIDIA’s success over other GPU players. Its strategy to continuously provide upgrades at six-month intervals and keep innovation as its core propelled its growth. It pushed its product designs to maximum technical limits, always providing the best end-user performance.

NVIDIA also chose its niche, gamers, well. This audience was smaller than the main consumer market for PCs, but it was knowledgeable, and that kept NVIDIA at the cutting edge of technology. NVIDIA found the right forums to connect with them, either at conferences or through social media, and used tech influencers well. But perhaps the best is yet to come! The age of super computers and super computer processors has put AI into overdrive, and NVIDIA is at the centre of this revolution that has already begun. Will it be the next Intel?

Zeiss

Zeiss has been the camera of choice for generations. It was the ingredient brand for Nokia phones in the 2000s as well, but that was possible because of Zeiss’ beginnings. Carl Zeiss opened his workshop in 1846 and focused on making scientific instruments and lenses. He developed the famous Jena optical glass.

By 1861, Zeiss was considered one of the best in Germany. By the end of WWI, it was the world’s largest camera production company. And in 1969, Zeiss Biogon lenses were used by NASA on the Apollo 11 mission to the moon. That is the heritage of Zeiss. Always considered a pioneer and leader in lenses!

It is not that Zeiss has had no competition over the years. It has just been more focused on delivering to consumer needs, on keeping up with the market. Over the years, it built a brand. It developed and released campaigns, showcasing new lenses and the picture quality from Zeiss lenses.

Zeiss also entered into collaborations and licensing agreements with other companies like Hasselblad, Rollei, Yashica, Sony, Logitech and Alpha. It either provided its brand for co-branding of lenses that were developed by other companies, or licensed its technology to them for complete optical design and manufacturing.

Zeiss and Nokia

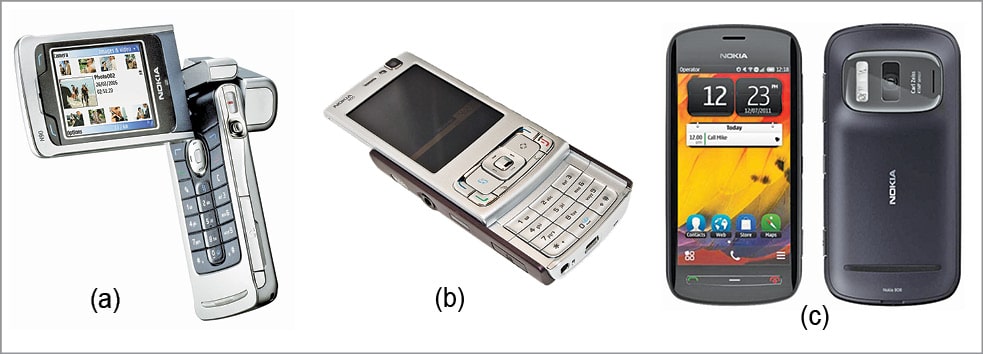

Zeiss entered the cellphone camera market with Nokia, which had, by 2004, become the world’s best-selling cellphone brand. And yet, Nokia did not have the best phone camera. Sony Ericsson did. So Nokia roped in Zeiss to develop a lens for its cellphone. The result was the N90, which was launched in April 2005 with a 2MP camera by Zeiss.

The partnership produced a series of phones, including the Nokia N95 with a 5MP camera, and had a steady hold over the consumer market.

The milestone of the Nokia and Zeiss partnership was the launch of the Nokia 808 PureView in 2012. The highlight was the name of the phone, which was solely based on the imaging quality of the phone camera.

What brought Nokia down was the launch of Apple’s iPhone and the multitude of Android phones. Both ran software that was far superior to Nokia’s Symbian. Even the Microsoft buyout did not help, because the launches that followed used the Windows platform, which was not popular for cellphones.

Recently, Nokia made an attempt to come back on the Android platform with the Nokia 8, again with a Zeiss lens. At last count, early this year, it had managed to gather a little over one per cent of the global cellphone market. While this is a far cry from its earlier high, it is not a total dud!

Nokia recently launched Nokia 6.1 and 5.1. And while Apple and Android are far ahead, we will have to wait and watch to see if this attempted comeback by Nokia goes anywhere.

Zeiss and Sony

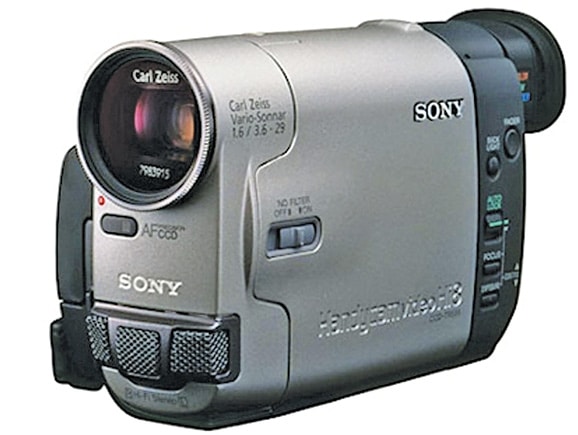

Well before the Nokia partnership, there was the Zeiss-Sony one, which commenced in 1995. It is another great example of ingredient branding. Because here too, just like Nokia, Sony was not well-known for optics and photography. However, since Sony was a reputable name with consumers, it used the expertise of Zeiss to launch a range of camcorders and digital cameras.

The first product launch of this partnership was Sony Handycam CCD-TR555. The partnership has also launched lens-style cameras that can be attached to smartphones.

Zeiss supports Sony throughout the optical design and development process. It then tests and approves prototypes. It also develops 25 of its own distinctive lenses that perfectly fit Sony cameras. This is how symbiotic this partnership is, and an extremely successful one too. They celebrated 20 years of this partnership in 2015, and are still going strong. They have sold more than 185 million products through this collaboration, which continues even today.

Looking forward

Zeiss, in the meantime, is not depending much on Nokia and Sony. It has the following four focus areas:

- Semiconductor manufacturing technology that is enabling the manufacture of powerful microchips

- Medical technology, like products and solutions for ophthalmology, neurosurgery, ENT surgery, dentistry and oncology

- Precision equipment and microscope systems to ensure even the tiniest structures and processes become visible

- Eyeglass lenses, movie and camera lenses, binoculars, etc

Zeiss is not only exploiting its core strength of lenses but has also taken that expertise into other industries.

Takeaway

From its inception, Zeiss has kept pace with the market, understood its consumers and been an innovator. It has had many firsts over the years. It has partnered with other camera companies like Hasselblad and Sony, which ensured a consistent delivery of quality lenses.

Building a brand early on, and consistently, is critical to the longevity of a business. Zeiss ensured that it survived when its competitors failed and positioned itself perfectly to be the ingredient brand in one of the biggest booms of camera phones.

The bottom line

A few key principles that can be taken away from these cases are:

- Speaking to a niche audience that is knowledgeable and impatient has advantages for a company; it is pushed to the limit when it comes to innovation. The desire to surprise its consumers can be a great motivator for a company.

- Partnering is a critical step to stay at the cutting edge. Zeiss did it for the longest and so did NVIDIA. Their partnerships include licensing, sharing of knowledge, technology and know-how.

- Building a brand with the end user is critical. This creates pull for the brand, and big players like Xbox, PlayStation and Nokia rely on the brand to build their expertise and loyalty with their customers. It also allows them to pass part of the premium on to the end user. This is a great position to be in for any company.

I hope this article inspires Indian ingredient brands to build for Indian and global markets. The principles are much the same wherever you may be. As long as you invest in development and choose your segment wisely, there are opportunities out there waiting to be either created or grabbed.

Abhimanyu Mathur is executive vice president, Lowe Lintas (part of MullenLowe Lintas Group)
